Lawyers, AI and Keyser Söze

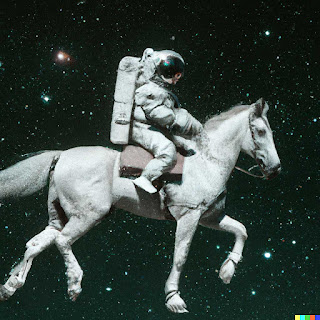

‘And like that … he’s gone … he became a myth, a spook story.’

‘The greatest trick the Devil ever pulled was convincing the world he didn’t exist.’

These great lines from the film ‘The Usual Suspects’ came to mind during a recent legal AI panel discussion. The panel were well informed and discussing a provocative theme: will AI pose a threat to lawyers by taking control away from them, and should they be afraid?

Nearly everyone on the panel, as well as those in the audience, talked of ‘AI making decisions’. This was not unusual. Go to any number of discussions on AI and they’ll follow this pattern.

For many, AI is entirely ethereal. They know it’s increasingly used in e-discovery and contract automation, which leads them to fear it could be coming for them next.

But they have no mental model or clear language for discussing AI. So there is a lack of clarity, and in the fog of confusion, the myth or spook story of AI as a potential slayer of lawyers’ livelihoods has grown. It’s taken on a mystique, much like a Brothers Grimm bogeyman. Or Keyser Söze.

Of course, it goes almost without saying that I don’t agree at all. Here is why, and my suggestions for exorcising the myth.

Don’t use the term AI. It covers too wide a range of technologies and is far too nebulous to convey any clear meaning. So it has become a b……t term, and a good indicator, along with ‘blockchain’ (outside of proof-of-work crypto), of pseuds, scenesters and potential scammers. A telling sign of this is Silicon Valley’s Y Combinator asking applicants to its remarkably successful program not to use the term. For myself, if there was a way to collectively short the shares of all legal tech firms with AI in the title, I would.

Instead, if it’s Machine Learning, refer to it as Machine Learning or ML. If it’s Natural Language Processing, as used by Siri and other virtual assistants and chatbots, then say so.

As a mental model for Machine Learning, think of automated statistics. This is not scary, especially as it’s the machine doing the hard work. You feed it some data. The model looks for statistically significant patterns and so trains itself (which is why quality of data always trumps quantity). Then, when you give it new data, it looks for those same statistical patterns and makes predictions about and from the new data.
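To make the ‘automated statistics’ idea concrete, here is a deliberately tiny sketch in Python. It is not how any real e-discovery product works. The data, the labels and the `train` and `predict` functions are all made up for illustration. The ‘model’ is nothing more than an average per label, and a prediction is simply whichever average the new value sits closest to.

```python
from statistics import mean

def train(examples):
    """Learn one average feature value per label from (value, label) pairs."""
    by_label = {}
    for value, label in examples:
        by_label.setdefault(label, []).append(value)
    return {label: mean(values) for label, values in by_label.items()}

def predict(model, value):
    """Predict the label whose learned average is closest to the new value."""
    return min(model, key=lambda label: abs(model[label] - value))

# Hypothetical training data: the number of contract-related terms found in
# each document, paired with a label assigned by a human reviewer.
training_data = [
    (12, "relevant"), (15, "relevant"), (9, "relevant"),
    (1, "not relevant"), (3, "not relevant"), (2, "not relevant"),
]

model = train(training_data)   # the "training" step: just averages
print(predict(model, 11))      # a new document with 11 such terms
print(predict(model, 4))       # a new document with 4 such terms
```

Real models use far more features and far cleverer statistics, but the shape is the same: patterns in, prediction out.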

ML does not make decisions. Ever, ever, ever. ML can only ever make predictions.

From these predictions, such as the likelihood of malignant growths in images, or degree of similarity between two trademarks, humans can make decisions. Or they can delegate the decisions to agents they program to act in certain ways based on the ML’s predictions. But such automated tools have no agency other than that given to them by humans. And they certainly have no innate intelligence.
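The split between prediction and decision is easy to see in code. In this hypothetical sketch the similarity score stands in for a model’s output, while the threshold and the resulting action are rules a person wrote down. The names `decide` and `REVIEW_THRESHOLD`, and the numbers, are assumptions for illustration only.

```python
def decide(predicted_similarity, review_threshold):
    """Apply a human-chosen rule to a model's prediction."""
    if predicted_similarity >= review_threshold:
        return "refer to a lawyer for review"
    return "no action"

# Chosen by a person, not learned by the model (illustrative value).
REVIEW_THRESHOLD = 0.7

# Hypothetical model output: predicted similarity between two trademarks.
similarity_score = 0.82

print(decide(similarity_score, REVIEW_THRESHOLD))
```

Change the threshold and the ‘decision’ changes, even though the prediction has not moved. The agency sits with whoever set the rule.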

So Machine Learning (or AI if you must) is not a myth or a spook story. It does exist. But it’s certainly not a decision-making devil. Where that happens, it’s within us!